The AI Full Stack

Understanding the Building Blocks of AI: From Data to Deployment and a List of Related Opportunities

Disclaimer: The views expressed in this article are personal and should not be considered investment advice.

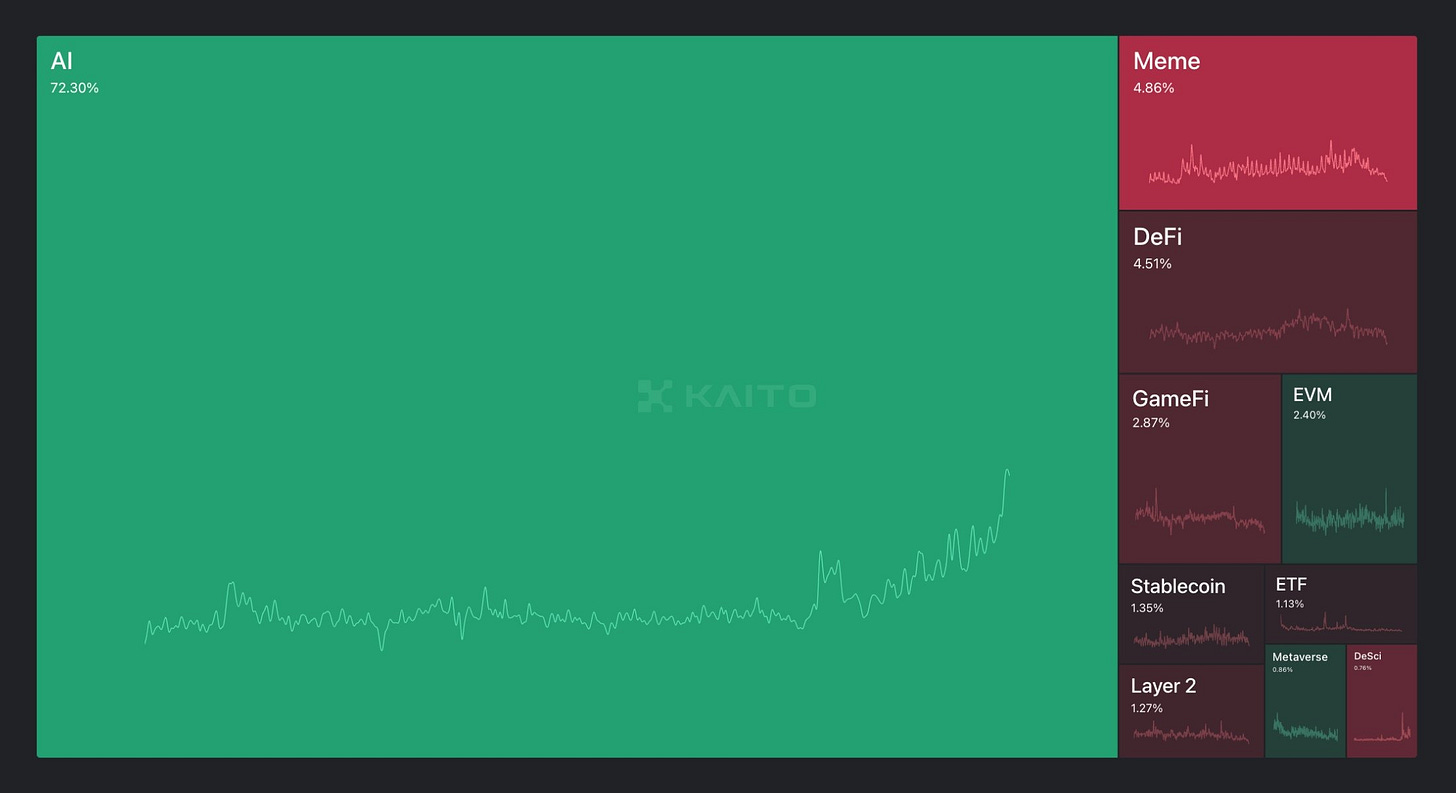

Ever since the mass introduction of ChatGPT, AI (Artificial Intelligence) has taken the world by storm. News headlines have shown mixed sentiment, ranging from “AI is replacing human tasks faster than you think” to “5 Reasons You Should Love AI”. On CT (Crypto Twitter), it’s clear that AI has emerged triumphant. AI mindshare has reached all-time highs, overtaking long-standing verticals like memecoins and DeFi. This article will focus on how AI works and the opportunities in the space that serve AI.

Artificial Intelligence

Initially, humans used simple code to perform tasks: if this condition is met, perform this action. However, the problems we must solve have become too complex to write out as explicit rules. The universe is interconnected and full of patterns that cannot be explained with a simple formula. That’s where AI comes in; AI is great at approximating functions and patterns if they exist in the problem statement. Currently, AI is applied to complicated problems such as supply chain optimisation, climate modelling, personalising healthcare, and more.

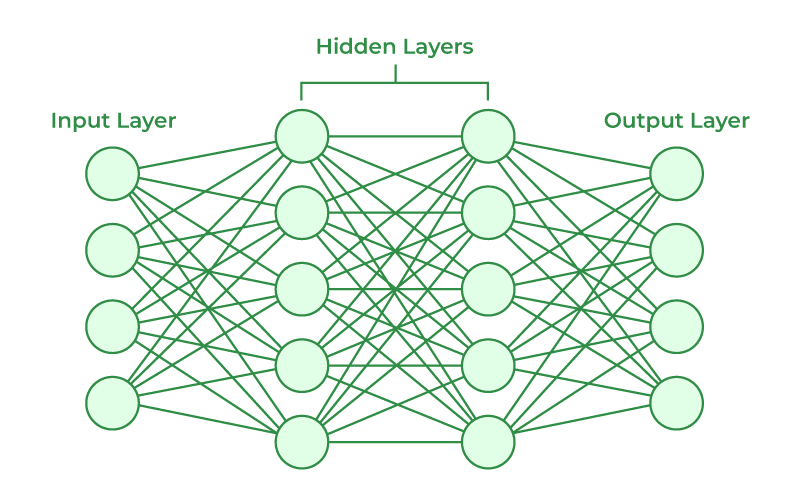

Many modern AI models are neural networks: networks of nodes loosely modelled on the human brain's network of neurons and synapses. Just like neurons, nodes turn an input into an output. Each line of nodes is called a layer and is connected to the next layer via weighted linkages written in code. Each node performs a certain function and can adjust the amount of information that passes through it to the next node. Generally, the more layers a model has, the more complex the patterns it can capture, and the more capable it is.
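To make the node-and-layer picture concrete, here is a minimal sketch of data flowing through a tiny network. The weights, biases, layer sizes, and the sigmoid function are illustrative assumptions rather than values from any real model; the point is only to show how each node turns its inputs into one output that feeds the next layer.

```python
import math

def sigmoid(x: float) -> float:
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def layer_forward(inputs, weights, biases):
    """Each node takes a weighted sum of its inputs, adds a bias, and applies sigmoid."""
    outputs = []
    for node_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(node_weights, inputs)) + bias
        outputs.append(sigmoid(total))
    return outputs

def network_forward(inputs, layers):
    """Pass the inputs through every layer in order."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations

# Hypothetical network: 2 inputs -> 3 hidden nodes -> 1 output node.
layers = [
    ([[0.5, -0.2], [0.1, 0.8], [-0.3, 0.4]], [0.0, 0.1, -0.1]),
    ([[0.7, -0.5, 0.2]], [0.05]),
]

output = network_forward([1.0, 2.0], layers)
print(output)  # a single number between 0 and 1
```

The weights are the "linkages" between nodes: changing them changes how much of each input reaches the next node, which is exactly what training adjusts.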

It is arduous for humans to write such complex solutions in code. For that reason, we first build a bot to build bots. At a high level, there are two bots which serve different functions:

Builder Bot: Randomly alters the actions of nodes and connections between nodes.

Teacher Bot: Grades the bots Builder Bot builds by referencing an answer key we humans provide.

Builder Bot randomly builds thousands of bots and sends them to Teacher Bot to get graded. The test is thousands of questions long. The worse-performing bots are discarded, while the better performers are kept and tweaked by Builder Bot. Over time, there’s bound to be one combination that will be useful after random changes in wiring. This loop repeats over and over until the ULTIMATE bot is made. Additionally, having more data results in longer tests, which generally leads to better bots.

The only things that affect how the bot comes together are the data used in the test and how the human(s) designed the test to define what is right and wrong.
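The Builder Bot and Teacher Bot loop described above can be sketched as a small random-search program. This is a toy illustration under stated assumptions: each "bot" is just a pair of numbers `(a, b)` that answers a question `x` with `a * x + b`, the answer key follows a made-up rule, and the population sizes and step size are arbitrary. The function names `teacher_grade` and `builder_tweak` are hypothetical.

```python
import random

rng = random.Random(0)  # fixed seed so the run is repeatable

# Answer key supplied by humans: (question, correct answer) pairs.
# The hidden rule here is y = 3x - 2, chosen only for illustration.
answer_key = [(x, 3 * x - 2) for x in range(-5, 6)]

def teacher_grade(bot):
    """Teacher Bot: mean squared error against the answer key (lower is better)."""
    a, b = bot
    return sum((a * x + b - y) ** 2 for x, y in answer_key) / len(answer_key)

def builder_tweak(bot, step=0.5):
    """Builder Bot: make a small random change to an existing bot."""
    a, b = bot
    return (a + rng.uniform(-step, step), b + rng.uniform(-step, step))

# Start with 50 completely random bots.
population = [(rng.uniform(-10, 10), rng.uniform(-10, 10)) for _ in range(50)]
initial_best = min(teacher_grade(bot) for bot in population)

for generation in range(200):
    population.sort(key=teacher_grade)   # grade every bot
    survivors = population[:10]          # keep the best, discard the rest
    children = [builder_tweak(rng.choice(survivors)) for _ in range(40)]
    population = survivors + children    # survivors plus tweaked copies

best_bot = min(population, key=teacher_grade)
final_best = teacher_grade(best_bot)
print(best_bot, final_best)
```

Because the best bots always survive each round, the top score can never get worse, only stay the same or improve. Notice that nothing in the loop "knows" the rule behind the answer key; only the test and the data shape the result.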

Also, it is worth noting that when given a set of inputs and outputs that reflect the equation y=x+1, a human quickly understands this as a simple rule: "Add 1 to the input to get the output." In contrast, an AI model doesn't inherently "understand" this rule in the way humans do. Instead, it learns to approximate the relationship between the inputs and outputs by adjusting the weights of its linkages through training.

The AI doesn't "know" that adding 1 is the correct operation. It discovers a combination of weights and biases across its layers that produce outputs close to y=x+1 for the given inputs. This process might involve creating a function far more complex than the simple addition humans intuitively recognize. As a result, the AI doesn't explicitly encode the idea of "adding 1"; it merely captures the pattern of the relationship through trial and error during its training phase.
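As a rough illustration of this idea, the sketch below trains the simplest possible model, a single node with one weight and one bias, on examples of y = x + 1 using gradient descent. The learning rate, number of epochs, and training inputs are arbitrary assumptions. Real models have many more weights and may arrive at a far more convoluted internal function than this one.

```python
# Training examples that follow y = x + 1. The model never sees the rule itself.
inputs = [0.0, 1.0, 2.0, 3.0, 4.0]
targets = [x + 1.0 for x in inputs]

weight, bias = 0.0, 0.0   # the model starts out knowing nothing
learning_rate = 0.05      # illustrative value

for epoch in range(2000):
    grad_w = 0.0
    grad_b = 0.0
    for x, y in zip(inputs, targets):
        error = (weight * x + bias) - y
        # Gradients of the mean squared error with respect to weight and bias.
        grad_w += 2 * error * x / len(inputs)
        grad_b += 2 * error / len(inputs)
    # Nudge the parameters in the direction that reduces the error.
    weight -= learning_rate * grad_w
    bias -= learning_rate * grad_b

prediction = weight * 10.0 + bias   # an input the model never saw during training
print(weight, bias, prediction)
```

The model ends up with a weight and bias both very close to 1, so it behaves like "add 1" without that rule ever being written into it. It only holds numbers that happen to reproduce the pattern.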

For a more fun explanation, watch this. For a more in-depth explanation of AI, watch this.

The AI Full Stack

The AI Full Stack encompasses the essential components required to train, deploy, and maintain AI models. There are different layers and considerations when building a functional and scalable AI pipeline. Here is a breakdown of the stack with the purpose and some crypto projects related to each layer.

(Sharing of protocols should not form the basis for making investment decisions, nor be construed as a recommendation or advice to engage in investment transactions.)

Data

Data is the lifeblood of AI, serving as the critical input that enables models to learn patterns, make predictions, and improve over time. To begin, raw data has to be collected. This depends on the specific problem the AI is being trained to solve. This information can come from a variety of sources, such as sensors, APIs, user interactions, or other primary inputs.

Once collected, the data has to be processed to ensure it is suitable for training. This involves cleaning the data to remove inconsistencies or noise, labelling it to provide examples of correct outputs for given inputs, and formatting it to align with the requirements of the AI model. Additionally, feature engineering plays a crucial role in this stage, where key variables are identified and optimized to enhance model performance. Common operations in feature engineering include selecting the most relevant features, scaling or normalizing numerical values, and creating new variables by combining existing ones.
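The processing steps above (cleaning, scaling, and creating new variables) can be sketched in a few lines. The dataset, the field names `height_cm` and `weight_kg`, and the derived `bmi` feature are all hypothetical, chosen only to show one instance of each operation.

```python
# Hypothetical raw records; one has a missing value.
raw_rows = [
    {"height_cm": 170.0, "weight_kg": 65.0},
    {"height_cm": 180.0, "weight_kg": 81.0},
    {"height_cm": None, "weight_kg": 70.0},   # incomplete record
    {"height_cm": 160.0, "weight_kg": 48.0},
]

def clean(rows):
    """Cleaning: drop any record that has a missing value."""
    return [r for r in rows if all(v is not None for v in r.values())]

def min_max_scale(values):
    """Scaling: map values onto the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]

def add_bmi(row):
    """Feature creation: combine two existing variables into a new one."""
    height_m = row["height_cm"] / 100.0
    return {**row, "bmi": row["weight_kg"] / (height_m ** 2)}

cleaned = [add_bmi(r) for r in clean(raw_rows)]
scaled_heights = min_max_scale([r["height_cm"] for r in cleaned])

print(cleaned)
print(scaled_heights)
```

Dropping incomplete records is only one cleaning strategy; filling in missing values is another common choice, and which is appropriate depends on the problem.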

Finally, the processed data must be stored securely for future use. Databases are essential for efficient access, management, and updating of data, enabling iterative improvements in the AI model over time. Proper handling of data across these stages is vital to building robust and reliable AI systems.

Data Collection: $GRASS, $OCEAN, $LAKE

Data Processing: Sahara AI

Storage: $SHDW, $FIL, $AR, and more.

Compute Power

Large computational resources are the backbone of AI training and deployment. GPUs (Graphics Processing Units) are the most common hardware used because they can perform many computations in parallel, which makes them ideal for speeding up machine learning tasks. There has been a rise in demand for cloud-based solutions from providers like AWS, Google Cloud, and Microsoft Azure, as they offer customers the flexibility to scale AI operations without the huge upfront costs. This layer is critical for running large-scale experiments, training deep learning models, and performing inference efficiently.

Cloud-Solutions: $XMW, $IO, $RENDER

Model Development

After securing data and computational resources, model development is the next critical step in the AI pipeline. This layer involves designing, training, and refining AI models for accuracy and performance. Key components include machine learning frameworks which provide tools to build and optimise models. Pre-trained models like GPT often serve as a foundation for custom models through transfer learning. Programming languages like Python are widely used alongside specialised libraries and Software Development Kits (SDKs), which simplify integrating AI capabilities into applications. Other tooling includes experimentation platforms which help track and optimise the development process by visualising results and comparing iterations.

Frameworks: $ARC, $ai16z, $SNAI, $SWARMS, and more.

AI Launchpads & Agents

This is the application layer of AI, where models are deployed and interacted with in real-world scenarios. AI agents are intelligent systems designed to execute specific tasks; examples include chatbots, recommendation engines, and virtual assistants. Inference engines play a crucial role here: they load a trained model and run it on new inputs to produce predictions in real time. Deployment platforms or virtual environments ensure scalability and availability. This layer bridges the gap between the backend processes and the end-users, making the trained models accessible and actionable.

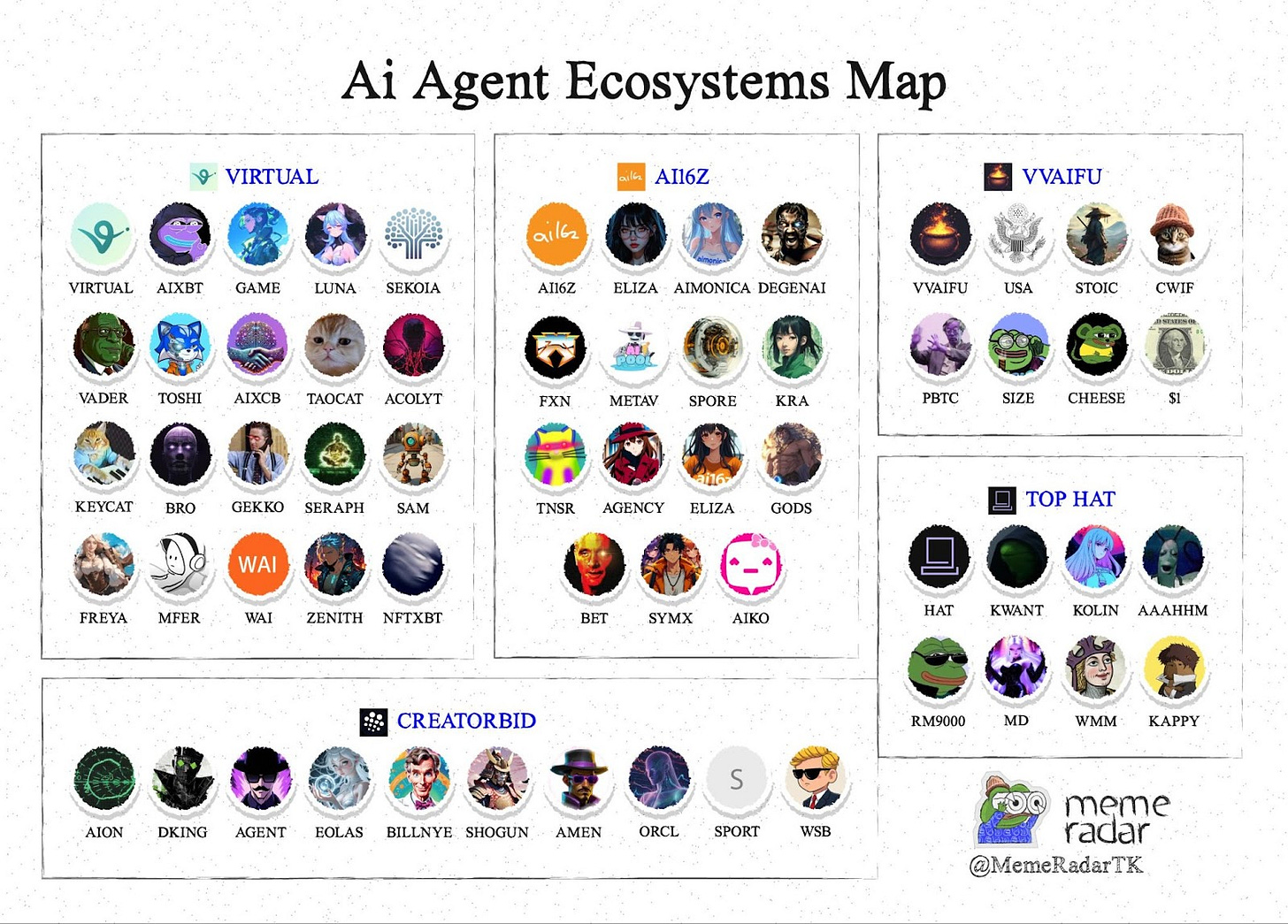

Launchpads: $GRIFFAIN, $ALCH, $VIRTUAL, and more.

AI Agents: $AGENCY, $AIXBT, $VADER, and more.

Monitoring and Maintenance

Once deployed, AI models require continuous monitoring and maintenance to ensure they operate effectively. This layer involves setting up data pipelines that automate the flow of data from collection to model consumption, enabling consistent performance. Monitoring tools track key metrics like accuracy, latency, and data drift to detect and address issues in real time. Maintenance tasks may include retraining models with updated data, debugging errors, and enhancing robustness against changing conditions. This ensures the AI system remains reliable and aligned with its intended purpose over time.
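A monitoring check along these lines might look like the sketch below. The thresholds, the function names, and the drift measure (how far the live data's mean has moved from the training mean, in units of the training standard deviation) are simplifying assumptions; production monitoring tools typically use more robust statistics.

```python
import statistics

def accuracy(predictions, labels):
    """Fraction of predictions that match the true labels."""
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)

def mean_shift(training_values, live_values):
    """Crude drift score: shift in the mean, in training standard deviations."""
    train_mean = statistics.mean(training_values)
    train_std = statistics.stdev(training_values)
    return abs(statistics.mean(live_values) - train_mean) / train_std

def check_health(predictions, labels, latencies_ms, training_values, live_values,
                 min_accuracy=0.9, max_latency_ms=200.0, max_drift=1.0):
    """Return a list of alerts; an empty list means all checks passed."""
    alerts = []
    if accuracy(predictions, labels) < min_accuracy:
        alerts.append("accuracy below threshold")
    if max(latencies_ms) > max_latency_ms:
        alerts.append("latency above threshold")
    if mean_shift(training_values, live_values) > max_drift:
        alerts.append("possible data drift")
    return alerts

# Hypothetical readings from a deployed model.
alerts = check_health(
    predictions=[1, 0, 1, 1],
    labels=[1, 0, 0, 1],
    latencies_ms=[120.0, 150.0, 90.0],
    training_values=[10.0, 12.0, 11.0, 9.0, 13.0],
    live_values=[20.0, 21.0, 19.0],
)
print(alerts)
```

In this example the accuracy and drift checks fire while latency stays within its limit. An alert like "possible data drift" is the kind of signal that would prompt retraining the model with updated data.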

Compliance

Compliance includes both ethical and regulatory compliance. Currently, there is not much concern for compliance. However, this aspect is critical to gaining public trust, which will be a key challenge for mass adoption. Being compliant ensures responsible AI use and addresses concerns about fairness, transparency, and accountability. Without it, AI systems risk perpetuating biases or violating user rights, leading to harmful outcomes.

I'm not familiar with any protocols that specialize specifically in AI compliance, likely because comprehensive regulation is still in its early stages. However, many jurisdictions are actively developing legal frameworks to address the unique opportunities and challenges presented by AI technologies, making this an area of potential interest and future development.

Below is a list of protocols that use AI to complement their compliance processes.

Conclusion

AI has revolutionised the way we approach complex problems, enabling us to leverage vast amounts of data, powerful computational resources, and advanced algorithms to create systems that learn, adapt, and transform industries. The AI Full Stack highlights the essential components needed to build, deploy, and maintain these intelligent systems. From collecting and processing data to deploying AI agents and ensuring ethical compliance, each layer plays a critical role in building scalable, functional AI solutions.

Yet, we are only scratching the surface of AI’s potential. With rapid advancements in research and technology, the future holds exciting possibilities. We may soon see breakthroughs that fundamentally reshape how AI systems are developed, perhaps even leading to lab-grown AIs. The road ahead is both unpredictable and thrilling, as AI evolves to tackle challenges we haven’t even imagined yet.

As AI continues to evolve, it is essential to address challenges such as fairness, transparency, and regulatory compliance to foster public trust and ensure its responsible use. The potential of AI is immense, but its success depends on balancing technological innovation with ethical considerations and practical applications. Whether in crypto, healthcare, or beyond, the opportunities are limitless, and understanding its stack is the first step to navigating this transformative space effectively.